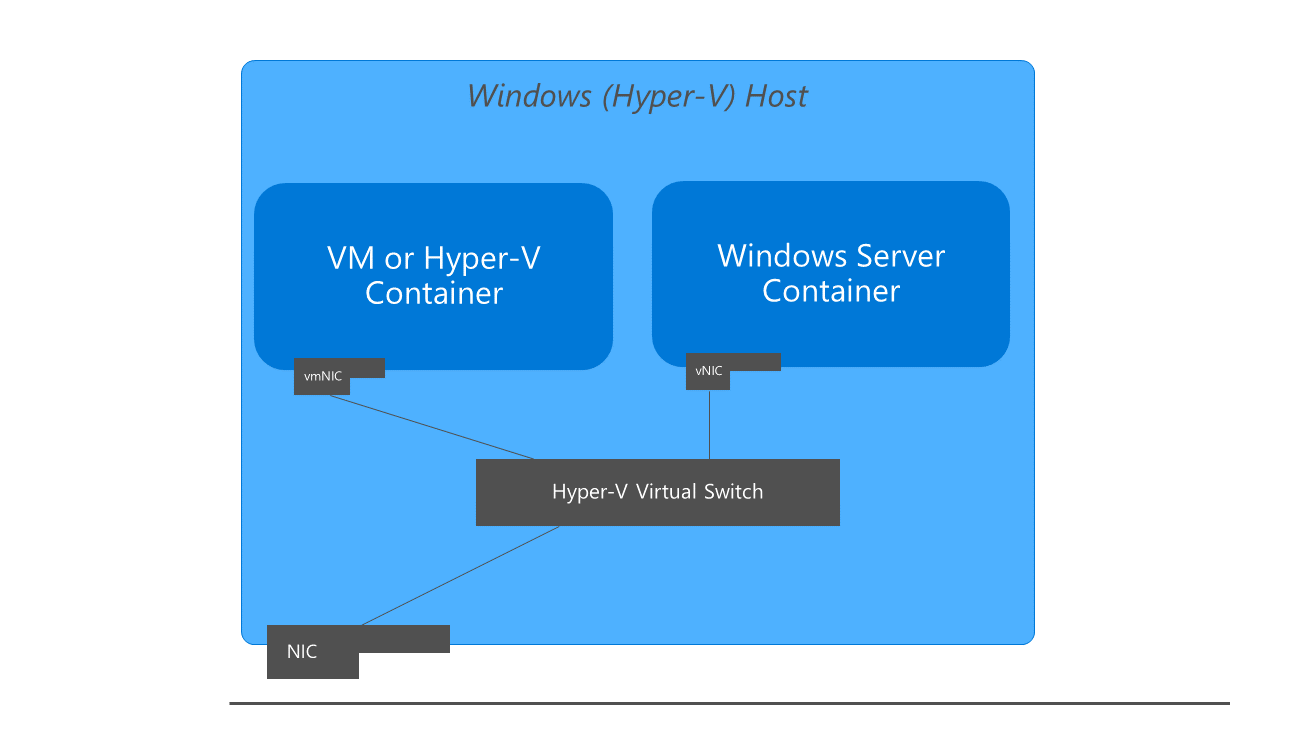

If this is something that is required, maybe we need to look again at the feature and make it more of a standard feature. Any more information on your use case would be helpful: let me know what your use case is and whether it's the same as the …

a) We couldn't guarantee that a specific port is available on a testing node, as another test set might be running at the same time and using the same port (we run sets of tests in parallel).

b) What I meant is that, because we're using docker-compose, we can't say 'use any available port on the host', which is why binding onto localhost could be unreliable in this case.

c) Multiple docker-compose sets are trying to spin up the same services, using the same ports for different test sets.

After writing all this, I realised we could do fine with binding onto the host; sometimes we might have to manually `docker rm` to free the ports up, and give up test set parallelism.

There is also … which may work, depending on what you're trying to do exactly. We technically provide a DNS name you can use for this purpose: this is currently used by, as you can guess, kubernetes, allowing us to share the kube context on the host and inside containers.

However, if you're looking for a solution that is 'developer only' (this won't necessarily work in production) and you are trying to standardise a mechanism for talking to containers, either from a different container or from the host, you need to use a DNS name (not an IP address). The DNS name will resolve to different IP addresses inside containers and on the host; we no longer have an IP address that is accessible by both the host and the container. Generally, for container-to-container communication, we have DNS names provided by docker compose, for example.
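
On the port clash described above (parallel test sets competing for the same host port), one possible workaround is to ask the operating system for an unused port before starting each test set, rather than hard-coding one. The snippet below is only a minimal sketch of that idea, not something proposed in this thread: `find_free_port` is a hypothetical helper name, and it assumes the test runner can pass the chosen port on to whatever starts the containers.

```python
import socket


def find_free_port(host="127.0.0.1"):
    """Return a TCP port that was unused on `host` at the time of the call.

    Binding to port 0 asks the OS to pick any free ephemeral port.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        s.bind((host, 0))
        # getsockname() returns (address, port) for an AF_INET socket.
        return s.getsockname()[1]


if __name__ == "__main__":
    # Hypothetical usage: print the port so a test script can export it
    # before bringing the services up.
    print(find_free_port())
```

Note that the port is only guaranteed to be free at the moment it is chosen. Another process could grab it before the container binds to it, so this narrows the race between parallel test sets rather than removing it.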

Implementing something which allows you to access the host from a container AND use the same mechanism for container-to-container communication is not a best practice, because at scale the system often needs multiple hosts across a cluster, and so the hostname (and IP) will change.